This short presentation shows how, at Forex Academy, you can discover the secrets behind risk control, and how position size affects your profits and your probability of ruin.

Forex Academy’s Educational Library consists of the finest collection of written and video pieces of learning material. It focuses on every relevant aspect of trading.

On the daily chart, the AUDUSD pair is moving lower in an A-B-C pattern from the high reached on January 25 (0.81358). The BC segment developed a bullish divergence, which alerts us to the exhaustion of the bearish movement; however, this divergence is not a reversal signal. In the last sequence of wave C, the price tested the 0.73179 level (FE 141.4) twice.

On the 4-hour chart, the move between B and C fell in five waves from 0.79163 to 0.73105, reached on July 02. From this level, the Aussie started an internal bullish move that reached 0.74838, surpassing the previous high of 0.74438 in time and shape. Currently, the price is moving in a range that could be the start of a new bullish cycle.

For the coming sessions, we foresee new upside, which could be activated as long as the price bounces from the area between 0.73562 and 0.73265, with a potential profit target area between 0.75382 and 0.75956. The invalidation level of this scenario is 0.73105.

Forex Daily News: In this post, we analyse the Canadian Dollar group against the main currencies. In summary, for the second half of the year and 2019, we foresee a corrective movement in the Canadian currency, which could be supported by a correction in oil prices and then give way to a new rally. After this correction, our central view for the Canadian Dollar is a new appreciation scenario.

Additionally, we observe that it is likely that GBP and EUR would show the best performance against the CAD; on the opposite side, the Japanese currency and the Swiss Franc could have the worst performance against the Canadian Dollar.

The USDCAD is developing a complex corrective structure of a second bullish impulsive wave. The corrective structure has a bearish bias, which could find support in the area between 1.29607 and 1.28371. The key level to watch is 1.2884; this level should turn into a critical pivot level (HHL).

In the short term, the EURCAD cross has a bearish bias and could probably see new lows in the 1.50 zone. In the mid term, the cross moves sideways as a complex corrective structure. In the long term, we foresee that EURCAD could find fresh lows in the area between 1.47822 and 1.45662, from where the cross could start a new rally as a fifth bullish wave. The invalidation level is at 1.4442.

The GBPCAD cross probably shows the clearest movement of the CAD group. The price is moving in a bearish A-B-C sequence, which could find support at the 1.6410 level, from where the price could create a new connector and then initiate a rally. The new bullish sequence targets the area between 1.8533 and 1.9266. The invalidation level is at 1.5837.

The CADJPY cross was discussed in a previous analysis, and we maintain the main idea, which is to seek only long positions with a long-term profit target in the area between 94.69 and 95.30. The cross will probably retrace to the area between 85.45 and 83.73, from where we could find new opportunities to join the trend. The invalidation level is located at 82.17.

In the CADCHF cross, the motto is “Buy the Dips” or “Watch the Breakout.” CADCHF is running sideways in an upper-degree consolidation structure. The key level to watch is 0.7636; after a breakout of this level, we expect more upside toward the zone between 0.7992 and 0.8245. If the price makes a false breakdown to the area between 0.7394 and 0.7289, it could be an attractive opportunity to look for the long side. The invalidation level is at 0.7124.

In the long term, NZDCAD is running sideways and making lower highs. The long-term pivot level is at 0.8640. For this cross, we expect only short positions; if the price makes a bullish move, the potential movement is limited to the area between 0.9253 and 0.9461. The long-term target area is between 0.8401 and 0.8098.

The AUDCAD cross is probably the least attractive to trade. As we can see in the weekly chart, it has been running sideways since the second half of 2013. The price is moving inside a bearish cycle, which could find support at the “long-term pivot level” at 0.8919, from where AUDCAD could start to bounce. The invalidation level for the bearish cycle is at 1.0397.

Forex Daily News: Finally, as a technical note, considering that the AUDCAD is mostly bearish, by correlation, the CAD should perform better than the AUD for the period foreseen.

Introduction

Traders want to win. Nothing else matters to them, and they believe the most important question is timing the entry. Exits don’t matter at all, because if they time the entry, they could easily get out long before a retracement erases their profit. Or so they believe.

That’s the reason there are thousands of books about Technical Analysis, Indicators, Elliott Wave Forecasting, and so on, and just a handful of books on psychology, statistical methods, and trading methodology.

The problem lies within us, not in the market. The truth is not out there. It is in here.

Several psychological problems afflict most traders. One of the most dangerous is the need to be right. Traders hate to lose, so they let their losses run, hoping to cover at a market turn, and cut their gains short, afraid to lose that small gain. This behavior, together with improper position sizing, is the cause of failure for most traders.

The second one is the firm belief in the law of small numbers. This means that the majority of unsuccessful traders infer long-term statistical properties from very short-term data. When their trading system enters a losing streak, they decide the system doesn’t work, so they look for another system which, in turn, is rejected when it enters another losing sequence, and so on.

There are two problems with this approach. The first one is that the trading account is constantly drained, because the trader discards the system when it sits at its worst performance, adding a negative bias to his or her results with every switch. The second one is that the wannabe trader can neither learn from the past nor improve the system.

This article is a rough approach to the problem of establishing a trading methodology.

1.- Diversification

The first measure a trader should take is:

What’s the advantage of a diversified portfolio:

The advantage of having a diversified portfolio of assets is that it smooths the equity curve, and we get a substantial reduction in the total drawdown. I’ve experienced myself the psychological advantage of having a large portfolio, especially if the volatility is high. Losing 10% on an asset is very hard, but if you have four winners at the same time, then that 10% is just a 2% loss in the overall account, which is compensated by, maybe, 4-6% profits on the other securities. That, I can assure you, gave me the strength to follow my system!

The advantage of running three or more trading systems in tandem is twofold. It also helps to reduce the overall drawdown and smooth the equity curve, because we distribute the risk among the systems. And it helps to raise profits, since every system contributes to profits in good times, filling the hole left by the underperforming one.

That doesn’t work all the time. There are days when all your assets tank, but overall a diversified portfolio together with a diversified catalog of strategies is a peacemaker for your soul.
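The arithmetic behind the 10% versus 2% example can be sketched in a few lines of Python. This is only an illustration, assuming five equally weighted positions; the function name `portfolio_return` and the individual gains are hypothetical values chosen to match the figures in the text.

```python
def portfolio_return(asset_returns, weights=None):
    """Weighted return of a portfolio; equal weights are assumed by default."""
    n = len(asset_returns)
    if weights is None:
        weights = [1.0 / n] * n
    return sum(w * r for w, r in zip(weights, asset_returns))


# Hypothetical case: one asset loses 10% while four others gain 5% each.
returns = [-0.10, 0.05, 0.05, 0.05, 0.05]

# Impact of the single loser on the whole account (10% / 5 positions = 2%).
loser_contribution = returns[0] / len(returns)

# Net result once the four winners are included.
total = portfolio_return(returns)

print(f"loser contributes {loser_contribution:.2%}, account result {total:.2%}")
```

With unequal position sizes, the same function accepts an explicit `weights` list.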

2.- Trading Record

As we said, deciding that a trading system has an edge isn’t a matter of evaluating the last five or ten trades. Even evaluating the last 30 trades is not conclusive at all. And changing erratically from system to system is worse than a random pick, for the reasons already discussed.

No system is perfect. At the same time, the market is dynamic. This week we may have a bullish, low-volatility market, and next week, or next month, we are stuck in a high-volatility choppy market that threatens to deplete our account.

We, as traders, need to adapt the system as much as is healthy. But we need to know what to adjust and by how much.

To gather information to make a proper analysis, we need to collect data. As much as possible. Thus, which kind of data do we need?

To answer this, we first need to look at which kind of information we really need. As traders, we would like data about the timing of our entries, our exits, and our stop-loss levels. As for the entries, we’d like to know if we are entering too early or too late. We’d like to know the same about profit-taking. Finally, we’d like to optimize the distance between entry and stop loss.

To answer the timing questions and find the optimum stop-loss distance, the data that we need to collect is:

All the above concepts are well known to most investors, except, maybe, the two bottom ones. So, let me focus this article a bit on them, since they are quite significant and useful, but not too well known.

MAE (maximum adverse excursion) is the maximum adverse price movement against the direction of the trade before it resumes a positive movement, excluding stops. That is, we take stops out of this equation and register the level at which the market turns to the side of our trade.

MFE (maximum favourable excursion) is the maximum favourable price movement we get on a trade, excluding targets. We register the maximum movement a trade delivers in our favour. We also observe that the red, losing trades don’t travel too far to the upside.

Having registered all this information, we can get statistical evidence about how accurate our entry timing is by analysing the average distance our profitable trades have to move in the red before moving to profitability.

If we pull the trigger too early, we will observe an increase in the magnitude of that mean distance together with a drop in the percent of gainers. If we enter too late, we may experience a very tiny average MAE but we are hurting our average MFE. Therefore, a tiny average MAE together with a lousy average MFE shows we need to reconsider earlier entries.
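As a rough sketch of how MAE and MFE could be recorded for each trade, the snippet below measures both excursions as fractions of the entry price. The function name `excursions`, the `direction` flag, and the sample prices are assumptions made for illustration; `prices` stands for whatever price series (ticks, or bar lows and highs) was observed while the trade was open.

```python
def excursions(entry, prices, direction=1):
    """Return (MAE, MFE) as positive fractions of the entry price.

    direction is +1 for a long trade and -1 for a short trade.
    Stops and targets are deliberately ignored, as in the definitions above.
    """
    # Signed move of every observed price, seen from the trade's side.
    moves = [direction * (p - entry) / entry for p in prices]
    mae = max(0.0, -min(moves))  # deepest move against the trade
    mfe = max(0.0, max(moves))   # furthest move in favour of the trade
    return mae, mfe


def average(values):
    """Plain arithmetic mean, used for the average MAE or MFE of a trade list."""
    return sum(values) / len(values)


# Hypothetical long trade entered at 100.0
mae, mfe = excursions(100.0, [99.0, 98.5, 101.0, 103.0])
print(f"MAE {mae:.2%}, MFE {mfe:.2%}")
```

Averaging the MAE of the winning trades gives the mean distance discussed above, and comparing it with the average MFE hints at whether entries tend to come too early or too late.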

We can, then, set the invalidation level that defines our stop loss at a statistically significant level instead of at a level that is visible for any smart market participant. We should remember that the market is an adaptive creature. Our actions change it. It’s a typical case of the scientist influencing the results of the experiment by the mere fact of taking measurements.
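One possible way to derive such a statistically based stop from the recorded data, not necessarily the procedure used for the graphs in this article, is to pick the MAE level that contains a chosen share of the winning trades. The function name `stop_from_mae`, the `coverage` parameter, and the sample values are hypothetical.

```python
import math


def stop_from_mae(winner_maes, coverage=0.95):
    """Smallest recorded MAE that contains `coverage` of the winning trades."""
    if not winner_maes:
        raise ValueError("at least one winning trade is required")
    ordered = sorted(winner_maes)
    # Index of the trade that closes the requested share of winners.
    index = max(0, math.ceil(coverage * len(ordered)) - 1)
    return ordered[index]


# Hypothetical MAE values (fractions of entry price) of ten winning trades.
maes = [0.002, 0.004, 0.005, 0.007, 0.008, 0.009, 0.010, 0.011, 0.012, 0.030]
print(stop_from_mae(maes, coverage=0.9))
```

A stop placed slightly beyond that level would have let most past winners survive while cutting the trades that wandered further into the red.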

Let’s have a look at an MAE graph of the same system after setting a proper stop loss:

Now most losing trades are cut at a 1.2% loss, about the level we set as the optimum in our previous graph (Fig 2). When this happens, we suffer a slight drop in the percentage of gainers, but it should be tiny, because most of the trades beyond that MAE level are losers. In this case, we went from 37.9% winners down to 37.08%, but the reward-to-risk ratio of the system went from 1.7 to 1.83, and the average trade went from $12.01 to $16.5.

In the same way, we could do an optimization analysis of our targets:

We observed that most of the trades were within a 2% excursion before dropping, so we set that target level. The overall improvement was rather small: the reward-to-risk ratio went to 1.84, and the average trade to $16.7.

These are a few observations that help us fine-tune our system using the statistical properties of our trades, together with a visual inspection of the latest entries and exits in comparison with the actual price action.

Other statistical data can be extracted from the track record to assess the quality of the system, evaluate possible actions to correct its behaviour, and assess essential trading parameters. Examples are the maximum drawdown of the system, which is very important for optimizing our position size, and the trade statistics over time, which show whether the profitability of the system shrinks, stays stable, or grows with time.

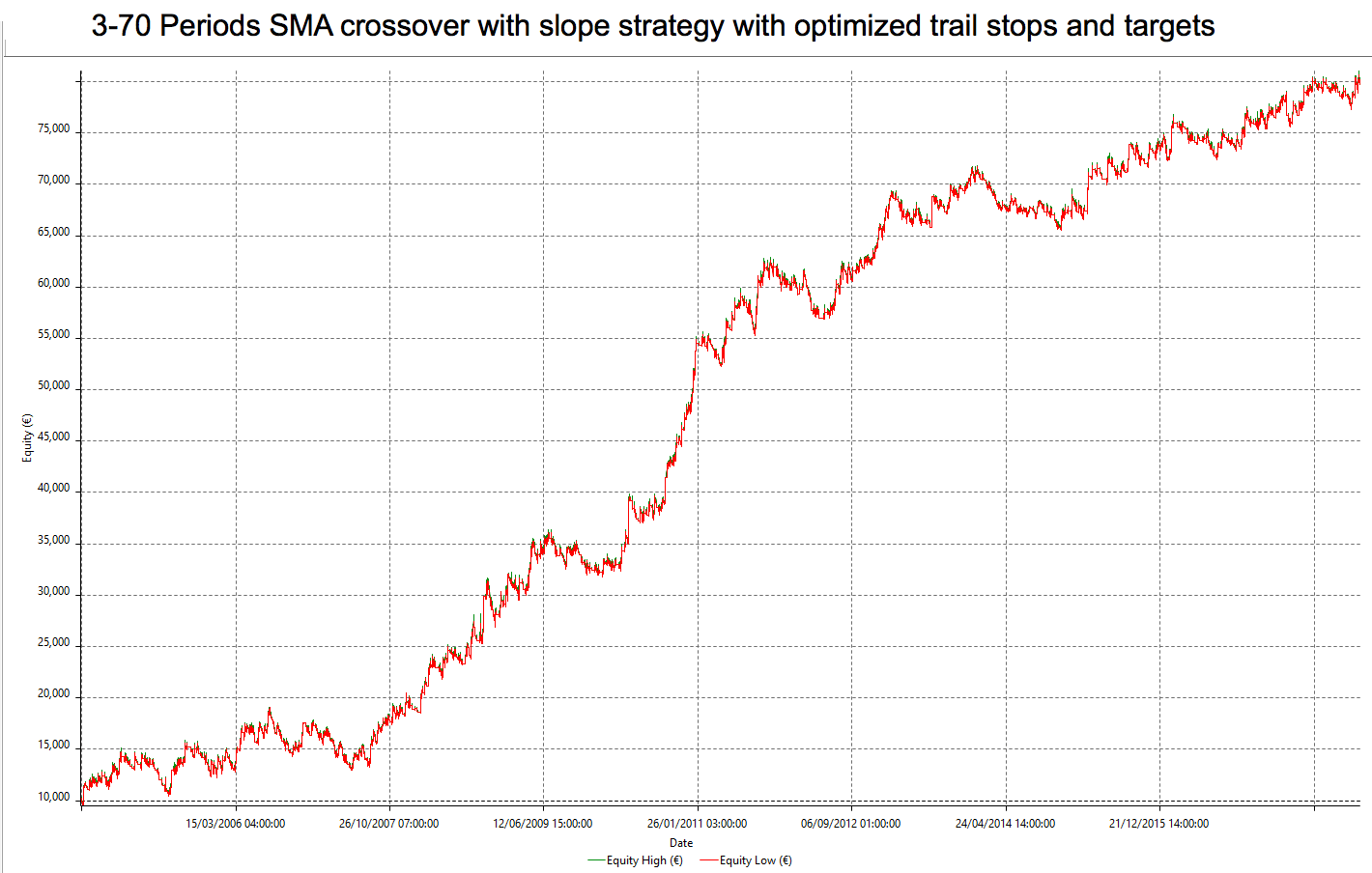

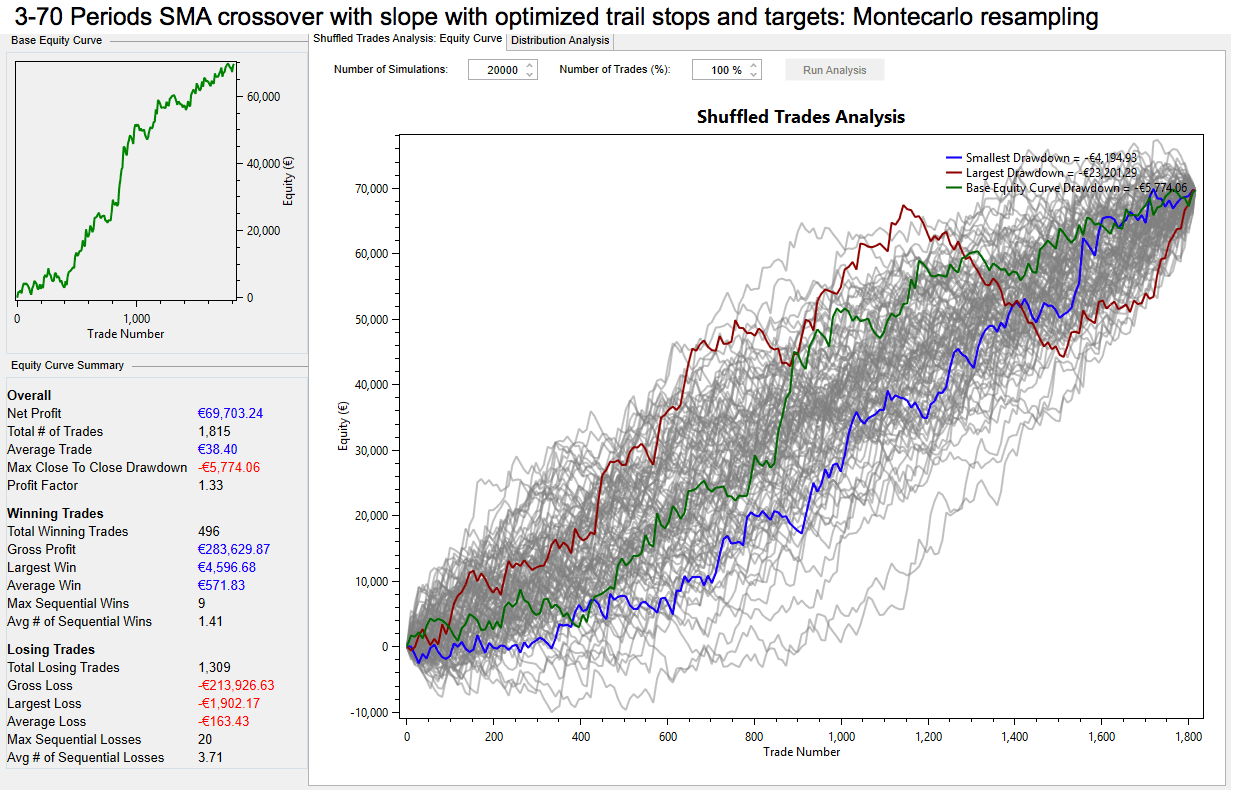

This kind of graph can be easily made on a spreadsheet. This case shows 12 years of trading history as I took it from a MACD trading system study as an example.

Of course, we could use the track record to compute derived and valuable information: to estimate the behaviour of the system under several position sizes and calculate its weekly or monthly results based on that estimation, along with the different drawdown profiles that result. Then the trader could decide, based upon his or her personal tolerance for drawdown, which combination of return and drawdown fits his or her style and psychological tastes.
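A minimal sketch of that kind of estimate follows. It assumes the track record is stored as trade results in multiples of the risk taken (R multiples) and that every trade risks a fixed fraction of current equity; both are illustrative assumptions, as are the function names and the sample trade list.

```python
def equity_curve(r_multiples, risk_fraction, start=10_000.0):
    """Equity after each trade when a fixed fraction of equity is risked."""
    equity = [start]
    for r in r_multiples:
        equity.append(equity[-1] * (1.0 + risk_fraction * r))
    return equity


def max_drawdown(equity):
    """Largest peak-to-valley decline, as a fraction of the peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


# Hypothetical track record in R multiples.
trades = [-1, -1, 2, -1, 3, -1, -1, -1, 2]

for fraction in (0.01, 0.02, 0.05):
    curve = equity_curve(trades, fraction)
    print(f"risk {fraction:.0%}: final {curve[-1]:.0f}, "
          f"max drawdown {max_drawdown(curve):.1%}")
```

Running the same trade list at several risk fractions shows how returns and drawdown grow together, which is the trade-off the trader has to weigh.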

The point is, to get the information we must collect data. And we need information, a lot of it, to avoid falling into the “law of small numbers” fallacy, and also to optimize the system and our risk management.

Note: All images were produced using Multicharts 11 Trading Platform’s backtesting capabilities.

The Nature of Risk and Opportunity

Trading literature is filled with vast amounts of information about market knowledge: fundamentals, Central Banks, events, economic developments and technical analysis. This information is believed necessary to provide the trader with the right information to improve their trading decisions.

On the other hand, the trader believes that success is linked to that knowledge: that a trade is good because the right piece of knowledge has been used, and that a bad trade went wrong because the trader made a mistake or didn’t accurately analyse the trading set-up.

The focus on this kind of information leads most traders to think that entries are the most significant aspect of the trading profession, and they spend most of their time trying to get “correct” entries. The other consequence is that novice traders prefer systems with a high percentage of winners over other systems, without a more in-depth analysis of other aspects.

The reality is that the market is characterized by its randomness, and that trading, as opposed to gambling, is not a closed game. Trading is open in its entry, length, and exit, which leaves room for countless ways to define its rules. Therefore, the trader’s final equity is a combination of the probability of a positive outcome (the frequency of success) and the outcome’s pay-off, or magnitude.

This latter variable, the reward-to-risk ratio of a system, technically called “the pay-off” but commonly called the risk-reward ratio, is only marginally discussed in many trading books, but it deserves closer study because it’s critical for the ultimate profitability of any trading system.

To help you see what I mean, Figure 1 shows a game with 10% winners that is highly profitable because it holds a 20:1 reward-to-risk ratio.

A losing game is also possible to achieve with 90% winners:

So, as we see, the percentage of winners alone tells us nothing about a trading strategy. We need to specify both parameters to assess the ultimate behaviour of a system.
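The two games can be compared with a small expectancy calculation. This is a sketch that assumes every losing trade costs one unit of risk (1R) and every winner pays the stated multiple of it. The 10% and 20:1 figures come from the text; the 0.1 payoff used for the 90% game is a hypothetical value, since the article does not state it.

```python
def expectancy(win_rate, reward_to_risk):
    """Average result per trade, in units of the amount risked (R)."""
    return win_rate * reward_to_risk - (1.0 - win_rate)


# Game from the text: 10% winners with a 20:1 payoff.
low_hit_rate_game = expectancy(0.10, 20.0)

# Hypothetical losing game: 90% winners that pay only 0.1 times the risk.
high_hit_rate_game = expectancy(0.90, 0.1)

print(f"10% winners at 20:1 -> {low_hit_rate_game:+.2f} R per trade")
print(f"90% winners at 0.1:1 -> {high_hit_rate_game:+.2f} R per trade")
```

A positive value means the game makes money on average, whatever the percentage of winners.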

Let’s call Rr the mean risk-reward of a system. If we call W the average winning trade and L the average losing trade then Rr is computed as follows:

Rr = W/L

If we call P the minimum percentage of winners needed to achieve profitability, then the condition for a system to be profitable at a given reward-to-risk ratio Rr is:

P > 1 / (1 + Rr)    (1)

Starting from equation (1) we can also get the equation that defines the reward-risk needed to achieve profitability if we define percent winners P:

Rr > (1-P) / P (2)
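For readers who want the intermediate steps, both conditions follow from requiring a positive average result per trade. The derivation below is a sketch using the symbols already defined (W, L, P, Rr), with P taken as the fraction of winning trades:

```latex
\begin{aligned}
P \cdot W - (1 - P) \cdot L &> 0 \\
P \cdot Rr - (1 - P) &> 0 \qquad \text{(dividing by } L\text{, with } Rr = W/L\text{)} \\
P \,(1 + Rr) &> 1 \\
P &> \frac{1}{1 + Rr} \qquad (1) \\[4pt]
\text{and, from the second line,}\quad Rr &> \frac{1 - P}{P} \qquad (2)
\end{aligned}
```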

If we use one of these formulas on a spreadsheet we will get a table like this one:

When we look at this table, we can see that, if the reward-to-risk ratio is 0.5, a trader would need two winners out of every three trades just to break even, while only one winner in every three trades is required in the case of a 2:1 payoff, and just one winner in every four trades if the mean reward is three times the risk.
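The same table can be reproduced outside a spreadsheet. The sketch below applies equations (1) and (2) directly; the function names and the list of ratios are illustrative choices, not taken from the original table.

```python
def min_win_rate(reward_to_risk):
    """Equation (1): break-even fraction of winners for a given Rr."""
    return 1.0 / (1.0 + reward_to_risk)


def min_reward_to_risk(win_rate):
    """Equation (2): break-even Rr for a given fraction of winners."""
    return (1.0 - win_rate) / win_rate


print("   Rr   break-even winners")
for rr in (0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 5.0):
    print(f"{rr:5.1f}   {min_win_rate(rr):.1%}")
```

Any system whose percentage of winners sits above the printed figure for its Rr is profitable on average, before costs.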

Let’s call n × R the opportunity of a trade, where R is the risk and n is the multiplier of R that defines the opportunity. Then we can observe that:

A high Rr ratio is a kind of protection against a potential decline in the percentage of winning trades. Therefore, we should make sure our strategies acquire this kind of protection. Finally, we must avoid Rr values under 1.0, since they require more than 50% winners, and that’s not easy to attain when we combine the usual entries with stop-loss protection.

One key idea by Dr. Van K. Tharp is the concept of the low-risk idea. As in business, in trading, a low-risk idea is a good opportunity with moderate cost and high reward, with a reasonable probability to succeed. By using this concept, we get rid of one of the main troubles of a trader: the belief that we need to predict the market to be successful.

As we stated in point 3 of lessons learned: we don’t need to predict. You’ll be perfectly well served with 20% winners if your risk reward is high enough. We just need to use our time to find low-risk opportunities with the proper risk-reward.

We can find a low-risk opportunity just by price location, as in Figure 3. Here we make use of a triple bottom, inferred from three dojis, as a fair chance of a possible price turn, and we define our entry above the high of the latest doji, to let the market confirm our trade. Rr is about 3.72 from entry to target, so we need just one winner out of four similar opportunities for our strategy to be profitable.

Finally, we should use Rr as a way to filter out the trades of a system that don’t meet our criteria of what a low-risk trade is.

If, for instance, you’re using a moving average crossover as your trading strategy, just by filtering out the low-reward trades you will stop trading when the price enters choppy channels.

As we observe in Fig 3, the risk is defined by the distance between the entry price and the stop-loss level, and the reward is the distance between the take-profit level and the entry price:

Risk = Entry price - Stop loss

Reward = Take profit - Entry price

Rr = Reward / Risk

In this case,

Entry price = 1.19355

Stop loss = 1.19259

Take profit = 1.19712

Therefore,

Risk = 1.19355 - 1.19259 = 0.00096

Reward = 1.19712 - 1.19355 = 0.00357

Rr = 0.00357 / 0.00096

Rr = 3.71875
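The same calculation, together with the idea of using Rr as a trade filter, can be sketched as follows. The prices are the ones from the example; the function names and the minimum ratio of 2.0 used for the filter are hypothetical choices for illustration.

```python
def reward_to_risk(entry, stop_loss, take_profit):
    """Rr = reward / risk, both measured as price distances from the entry."""
    risk = abs(entry - stop_loss)
    reward = abs(take_profit - entry)
    if risk == 0:
        raise ValueError("entry and stop loss must differ")
    return reward / risk


def is_low_risk_trade(entry, stop_loss, take_profit, min_rr=2.0):
    """Filter: accept the set-up only if its Rr reaches the chosen minimum."""
    return reward_to_risk(entry, stop_loss, take_profit) >= min_rr


rr = reward_to_risk(entry=1.19355, stop_loss=1.19259, take_profit=1.19712)
break_even = 1.0 / (1.0 + rr)  # equation (1)

print(f"Rr = {rr:.3f}, break-even winners = {break_even:.1%}")
print("take the trade:", is_low_risk_trade(1.19355, 1.19259, 1.19712))
```

Using absolute distances lets the same function serve short trades, where the stop sits above the entry and the target below it.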

©Forex.Academy

In this editorial we are going to discuss decentralized exchanges: why they exist or are going to exist, what their advantages are, and why people still aren’t using them. We will also give an overview of the current projects that are striving to solve these problems.

Let’s start by explaining how normal (centralized) exchanges work and what their purpose is. This will serve as an introduction to the problem that decentralized exchanges aim to solve.

Centralized exchanges act as a third-party matchmaker between a buyer and a seller of an asset. They are useful because they provide liquidity. What is also important is that the trading process is sped up by the convenience of having an account in which you have deposited funds, which are held by the exchange.

>Liquidity describes the degree to which an asset or security can be quickly bought or sold in the market without affecting the asset’s price.

Market liquidity refers to the extent to which a market, such as a country’s stock market or a city’s real estate market, allows assets to be bought and sold at stable prices. Cash is considered the most liquid asset, while real estate, fine art, and collectibles are all relatively illiquid.

Accounting liquidity measures the ease with which an individual or company can meet their financial obligations with the liquid assets available to them. There are several ratios that express accounting liquidity highlighted below.

Source: investopedia.com

The most popular examples of centralized cryptocurrency exchanges are: Coinbase, Kraken, Cex, Bitfinex, Poloniex…

In the cryptocurrency market exchanges are often divided into two types: fiat-crypto gateway (BTC/USD, ETH/USD, LTC/USD…) and altcoins (BTC/ZRX, BTC/DNT, ETH/ADA…)

This is important to point out because later we will discuss some common problems associated with decentralized exchanges (DEX) that are caused by these points.

Unlike centralized exchanges, DEXs aren’t owned by anyone. Instead, they are built on the same distributed ledger technology as Bitcoin and use smart contracts for order execution. This is important to point out, as it means that they do not hold their users’ funds or any other relevant information regarding their identity or location.

Some common examples are: Etherdelta, IDEX, Radar Relay.

Centralized exchanges charge high fees, which is something cryptocurrencies strive to eliminate. They can be hacked, which has happened numerous times in the past. They have ownership of your funds, which is not aligned with the advantages of cryptocurrencies in general (cryptos take pride in the fact that people have control of their own money). Crypto-unfriendly governments can restrict or ban their operations. For all the above reasons, they are viewed as the weakest link in the cryptocurrency market ecosystem.

That’s why decentralized exchanges are offered as a solution to the listed problems. They cannot be hacked, they cannot be tampered with, they are censorship resistant and do not hold your funds.

While DEXs are more beneficial when it comes to security (from hackers and government interference), anonymity and cost, there are still great challenges that they need to overcome in order to compete with their centralized competition. They are still difficult to use for the common person, as you would have to be crypto/tech savvy; they are somewhat limited in functionality and/or limited on the type of a cryptocurrency (for example only ERC20 tokens), and most importantly they don’t guarantee liquidity.

Having liquidity and a large trading volume is most important because we as traders are all about fast execution. If you have to wait for hours for your order to get filled, by the time it is filled you may no longer be looking at a good buy or sell opportunity.

Because of this, DEXs haven’t been used much compared to their centralized counterparts. But there are projects out there that aim to solve that problem as well. In the following paragraphs, we will review the most promising DEX projects.

0x is a protocol. That means it serves as a layer on top of the Ethereum blockchain for actually building decentralized exchange applications. They describe in their whitepaper that 0x is “a protocol that facilitates low friction peer-to-peer exchange of ERC20 tokens on the Ethereum blockchain. The protocol is intended to serve as an open standard and common building block, driving interoperability among decentralized applications (dApps) that incorporate exchange functionality. Trades are executed by a system of Ethereum smart contracts that are publicly accessible, free to use and that any dApp can hook into. DApps built on top of the protocol can access public liquidity pools or create their own liquidity pool and charge transaction fees on the resulting volume.”

OmiseGO is a project that incorporates many things, but bearing in mind the focus of this editorial, we are going to point out their DEX platform.

“OmiseGO is building a decentralized exchange, liquidity provider mechanism, clearinghouse messaging network, and asset-backed blockchain gateway. OmiseGO is not owned by any single one party. Instead, it is an open distributed network of validators which enforce behavior of all participants. It uses the mechanism of a protocol token to create a proof-of-stake blockchain to enable enforcement of market activity amongst participants. This high-performant distributed network enforces exchange across asset classes, from fiat-backed issuers to fully decentralized blockchain tokens (ERC-20 style and native cryptocurrencies). Unlike nearly all other decentralized exchange platforms, this allows for decentralized exchange of other blockchains and between multiple blockchains directly without a trusted gateway token.”

Source: OmiseGO whitepaper

Airswap is similar to 0x in the sense that it will allow users to exchange only ERC20 tokens, and in the sense that it’s a consensus project. The difference is that on Airswap the negotiation happens off the chain, while settlement happens on the chain.

“We present a peer-to-peer methodology for trading ERC20 tokens on the Ethereum blockchain. First, we outline the limitations of blockchain order books and offer a strong alternative in peer-to-peer token trading: off-chain negotiation and on-chain settlement. We then describe a protocol through which parties are able to signal to others their intent to trade tokens. Once connected, counterparties freely communicate prices and transmit orders among themselves. During this process, parties may request prices from an independent third party oracle to verify accuracy. Finally, we present an Ethereum smart contract to fill orders on the Ethereum blockchain.”

Source: Airswap whitepaper

Kyber Network is my favorite project, as it aims to emulate the exact same functionality and user experience as centralized exchanges.

“We design and build KyberNetwork, an on-chain protocol which allows instant exchange and conversion of digital assets (e.g. crypto tokens) and cryptocurrencies (e.g. Ether, Bitcoin, ZCash) with high liquidity. KyberNetwork will be the first system that implements several ideal operating properties of an exchange including trustless, decentralized execution, instant trade and high liquidity.”

The only difference is that they don’t use an order book, in order to finally solve the liquidity issue.

“Instead of maintaining a global order book, we maintain a reserve warehouse which holds an appropriate amount of crypto tokens for purposes of maintaining exchange liquidity. The reserve is directly controlled by the Kyber contract, and the contract has a conversion rate for each exchange pair of tokens by fetching from all the reserves. The rates are frequently updated by the reserve managers, and Kyber contract will select the best rate for the users. When a request to convert from token A to token B arrives, the Kyber contract checks if the correct amount of token A has been credited to the contract, then sends the corresponding amount of token B to the sender’s specified address. The amount of token A, after the fees, is credited to the reserve that provides the token B.”

Source: Kyber Network whitepaper
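The reserve mechanism described in the quote can be pictured with a toy model in plain Python. This is only an illustration of the described flow, not the actual Kyber smart contract: the `Reserve` class, the `convert` function, the rates, and the 0.25% fee are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Reserve:
    name: str
    rate: float             # token B paid out per unit of token A (set by its manager)
    balance_b: float        # token B available in this reserve
    balance_a: float = 0.0  # token A collected from conversions


def convert(reserves, amount_a, fee_rate=0.0025):
    """Convert amount_a of token A into token B at the best available rate.

    fee_rate is a made-up figure used only for illustration.
    """
    # Only reserves able to pay out the full amount are eligible.
    eligible = [r for r in reserves if r.balance_b >= amount_a * r.rate]
    if not eligible:
        raise ValueError("no reserve holds enough token B")
    best = max(eligible, key=lambda r: r.rate)      # best rate for the user
    amount_b = amount_a * best.rate
    best.balance_b -= amount_b                      # token B goes to the user
    best.balance_a += amount_a * (1.0 - fee_rate)   # token A, after fees, goes to the reserve
    return best.name, amount_b


reserves = [Reserve("reserve-1", 2.0, 100.0), Reserve("reserve-2", 2.1, 100.0)]
chosen, received = convert(reserves, 10.0, fee_rate=0.01)
print(chosen, received)
```

Because the user trades against a funded reserve rather than waiting for a matching order, the conversion can settle immediately, which is the liquidity argument made above.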

Having experienced these problems of centralized exchanges early on, the cryptocurrency ecosystem has already come up with a solution: decentralized exchange applications. They are still far from perfect but, as you can see from these promising examples, some major obstacles are already being solved as well. The first generation failed but offered great insight into how and where to look for progress. That’s the beauty of the free market: problems are being solved and those who can’t compete are left behind.

The greatest threat from centralized exchanges isn’t among the reasons I’ve listed. The greatest power centralized exchanges have is market manipulation. They collect so many cryptos through fees that they can manipulate the price in many ways. They also have visibility into the order book flow, which they can use to their advantage.

Something like that happened on October 8 last year on the Bittrex exchange. Even though they denied the accusations of market manipulation, the research done by The CryptoSyndicate Research Lab paints a different story.

For more check out the original post:

https://thecryptosyndicate.com/opinion-bittrex-anomaly/

In the spirit of decentralization that cryptocurrencies carry and promise, and in order to take power back from centralized entities, decentralized exchanges are emerging widely. It is up to us to choose what’s best for us, so I have no doubt in my mind that DEXs will become a new standard in the near future, but only after they offer an easy user experience and liquidity.

Having said that, and having in mind the projects that are already out there, “near future” may be sooner than we think.

The cryptocurrency market is still in its infancy. That means that the industry around it is too. People all around the world are jumping at the opportunity to take a piece of the pie, and whoever gets there first takes it. This results in a great influx of financial laymen offering advice, bringing news and teaching you how to trade, especially on YouTube, where people first come for information because it’s easier to absorb in video format.

A sentence I commonly hear among those who offer this information is: This is not financial advice, do your own research. They say this in order to legally protect themselves, yet they do offer financial advice. That’s why doing your own due diligence in the cryptocurrency market is crucial. And to assist you in that I’ve created a template for you to evaluate your source of information.

I’ve divided them into three categories: news, ICOs and analysis.

Regarding news:

1.1 Is it a direct source (e.g., the project’s website or its social media outlets such as Medium, a subreddit, Twitter)? (good)

1.2 Is it an indirect source (news website)? (neutral)

2.1 Entertainment (neutral)

2.2 To inform (good)

2.3 Advertisement and/or interest (bad)

Your goal should be to get as close to the source as possible. If you are interested in the latest developments of your favourite crypto project, join their Telegram group or Slack channel. Every established project has one. If not, go to Reddit and find their subreddit, where the admins are the official community managers for the project. Many of them also have a blog on Medium where they update their readers on a regular basis.

Regarding ICO recommendation:

1.1 Does it come from someone within the space (good)

1.2 Does it come from someone outside the space (neutral)

2.2.1 To promote because of his interest (bad)

2.2.2 Is it just a fresh topic (neutral)

2.2.3 Because he believes it’s a good idea (good)

Cross-reference that with other people recommending the same thing, and/or ask people for their opinion.

As an example, you don’t want to take your ICO recommendations from the CNBC’s show Crypto Trader. Why? Because those are sponsored, and the show is for entertainment purposes.

Analysis:

1.1 Success track record:

1.1.1 no track record (bad)

1.1.2 vague track record (neutral)

1.1.3 clear track record (good)

1.2 Prior (before crypto) engagement in financial markets

1.2.1 yes (good)

1.2.2 no (bad)

2.1 Only crypto market (neutral)

2.2. Broad understanding of financial markets (good)

In the crypto world, everybody’s a trader or knows a thing or two about technical analysis. And that’s a good thing, but choose carefully who you listen to when it comes to putting your money on the line. Ideally, you shouldn’t be listening to anybody. You should learn how to do your own analysis or hire a certified financial analyst to advise you on your investments.

That’s it. Next time you are searching for information, you have a way to validate it. I encourage you to expand on this template and create your own. Incorporate things that you find important when it comes to evaluating your source. And remember: always do your own research.

Novice traders enter the Forex markets with the illusion of becoming independent and wealthy. And they may be right. So why do 95% of forex traders fail?

After the lack of a trading plan and psychological weaknesses and biases, oversized positions are the main cause of failure.

I guess that the get-rich-quick mentality, an evident psychological weakness, drives them to trade big at the wrong time. Then fear and greed do the rest.

Therefore, my first recommendation for a new trader is to doubt his or her ability to withstand the psychological pressure to break the system. Withstanding it is much easier when risking small amounts. The first two years of trading should be dedicated to learning and practising the discipline needed to respect the trading rules.

To help ease your anxiety for a quick buck, let’s analyse the power of compounding.

Let’s first see, graphically, an account of 10,000 € growing at a monthly rate of 0.083%, a nominal annual rate of 1%, for 50 years (600 months):

Well, we observe that this state of affairs is only good for the bankers. It takes 50 years to grow 10K into 16.5K. That’s the reason we are willing to risk trading.

Let’s suppose we get a risk-free 10% annual return instead, again with monthly payments of 10%/12:

That is becoming interesting. But, one, we need to wait patiently for 50 years to become millionaires, and, two, we don’t know how much of that will be erased by inflation.

Let’s suppose we are investing à la Warren Buffett with an annual mean return of 26% that also grows steadily on a monthly basis. In this case, the graph is presented in semi-log scale for obvious reasons. The x-scale is in months while the y-scale shows how many zeros the account balance has. For instance, 10^6 means the account has a 1 followed by six zeros:

Now, that is another story! We see that in 50 years we will be as filthy rich as Warren Buffett et al.! We observe, also, that we add one zero to our account roughly once every 100 months. Not bad. We multiply our stake by ten roughly every eight to nine years! And that is achieved with a mean monthly rate of return on our capital of 2.17%, which means we just need to make sure we get a daily return of 0.11%.
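As a rough check on the three scenarios above, the following Python sketch compounds a 10,000 balance monthly at one twelfth of each nominal annual rate. The function names are illustrative, and it assumes the 26% figure is also applied as a nominal rate split into twelve monthly steps, as the 2.17% monthly rate suggests.

```python
import math


def compound(balance, annual_rate, months):
    """Balance after `months` of monthly compounding at annual_rate / 12."""
    monthly_rate = annual_rate / 12
    return balance * (1 + monthly_rate) ** months


def months_per_tenfold(annual_rate):
    """Months needed to multiply the balance by ten at annual_rate / 12 per month."""
    return math.log(10) / math.log(1 + annual_rate / 12)


# The three nominal annual rates discussed in the text, over 600 months.
for rate in (0.01, 0.10, 0.26):
    final = compound(10_000, rate, 600)
    print(f"{rate:.0%} nominal: {final:,.0f} after 600 months, "
          f"x10 every {months_per_tenfold(rate):.0f} months")
```

Under these assumptions, adding one zero at the 26% rate takes a little over 100 months.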

The problem is within us:

This is the same equity curve as the previous one, but on a linear scale. We observe that it shows an exponential line, and therein lies our psychological problem: the net equity grows relatively slowly at the beginning. At roughly one zero every 100 months, we need about 17 years to reach six zeros, but in another 17 years we will be close to eight. That shows that the power of compounding is a long-distance race, not a sprint.

Things are not that perfect in trading. We don’t see smooth curves rising to riches. We should expect not only run-ups but also drawdowns. Let’s observe the equity curve of a typical system using a nominal risk of 0.5% which takes, for simplicity, one trade per day, or 20 per month. And let’s put a magnifying glass on the first year of its history.

Starting Capital: 10,000

Mean ending Capital: 11,817

Capital % gain: 18.17%

Max drawdown: 2.64%

This is a real system, achievable, with the basic statistics as follows:

STRATEGY STATISTICS:

Nr. of Trades: 143

Gainers: 58.74%

Profit Factor: 1.74

Mean nxR: 1.22

Sample Stats Parameters:

Mean (Expectancy): 0.3070

Standard dev: 1.9994

Van K. Tharp SQN: 1.5353
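The SQN figure can be sanity-checked from the expectancy and standard deviation quoted above. The sketch below uses the usual form of the System Quality Number, sqrt(N) × mean(R) / stdev(R). Capping N at 100 trades is an assumption on my part: it is a convention often attributed to Tharp, and it is what brings the result close to the quoted value. The function name and the `cap` parameter are illustrative.

```python
import math


def sqn(expectancy, std_dev, n_trades, cap=100):
    """System Quality Number: sqrt(N) * mean(R) / stdev(R).

    `cap` limits N (assumed convention); pass cap=None to use every trade.
    """
    n = n_trades if cap is None else min(n_trades, cap)
    return math.sqrt(n) * expectancy / std_dev


capped = sqn(0.3070, 1.9994, 143)
uncapped = sqn(0.3070, 1.9994, 143, cap=None)
print(f"SQN with N capped at 100: {capped:.4f}")
print(f"SQN using all 143 trades: {uncapped:.4f}")
```

With the cap, the result lands within rounding distance of the quoted 1.5353; without it, the number comes out noticeably higher.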

The monthly mean profit using a 0.5% risk is 1.5%, which gives an annual growth of 18%. A bit less than what Warren Buffett has achieved. The nice feature is that, using a 0.5% risk, the max drawdown is 2.64%. Now, let’s see how this system fares, using exactly the same trade percent results, when risk rises because we increase the position size:

Starting Capital: 10,000

Mean ending Capital: 18,910

Capital % gain: 89.10%

Max drawdown: 10.38%

Starting Capital: 10,000

Mean ending Capital: 42,615

Capital % gain: 326.15%

Max drawdown: 25.39%

Starting Capital: 10,000

Mean ending Capital: 118,032

Capital % gain: 1,080.32%

Max drawdown: 47.67%

Starting Capital: 10,000

Mean ending Capital: 308,888

Capital % gain: 2,988.88%

Max drawdown: 79.99%

Starting Capital: 10,000

Mean ending Capital: 124,613

Capital % gain: 1,146.13%

Max drawdown: 96.83%

45% Risk:

Starting Capital: 10,000

Mean ending Capital: 14,725

Capital % gain: 47.25%

Max drawdown: 99.53%
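The relationship between position size, final capital and drawdown in the results above can be reproduced in miniature. The Python sketch below compounds one fixed sequence of trade results, expressed in R-multiples, at several risk levels and measures the maximum drawdown of each equity curve. The trade sequence is hypothetical, not the data behind the author's tables, and the function names are illustrative.

```python
def equity_curve(r_multiples, risk, start=10_000.0):
    """Compound a sequence of R-multiples, risking `risk` of current equity per trade."""
    equity = [start]
    for r in r_multiples:
        equity.append(equity[-1] * (1 + risk * r))
    return equity


def max_drawdown(equity):
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak, worst = equity[0], 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, 1 - value / peak)
    return worst


# Hypothetical trade results in R-multiples (not the author's data).
trades = [1.2, -1, -1, 2.0, -1, 1.5, -1, -1, -1, 2.5]
for risk in (0.005, 0.02, 0.10):
    curve = equity_curve(trades, risk)
    print(f"risk {risk:.1%}: final {curve[-1]:,.0f}, "
          f"max drawdown {max_drawdown(curve):.2%}")
```

The same trades produce very different curves: the risk fraction scales every win and every loss, so the losing runs cut deeper as the position size grows.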

From the above examples we take that:

A simple approach to compute the preferred risk per position is to be prepared for a streak of 10 to 15 consecutive losses.

Let’s suppose we want our drawdown to be limited to 20%. If our system statistics show that our percent winners are less than 50%, then we should be protected against at least 15 losers in a row. If our percent winners are above 50% and our mean reward-to-risk ratio is above 1, then we may settle for ten losers in a row.

The method to limit the risk is easy. We divide the drawdown amount by the losing streak number.

If we wanted to be protected against a 20-trade losing streak and our maximum tolerated drawdown is 20%, then 20%/20 tells us that we cannot risk more than 1% on each trade. In the case of a 15-trade losing streak, our max risk goes to 1.33%, and it goes to 2% in the case of a 10-trade streak.
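The division rule above is easy to put into code. The sketch below implements it directly and, as an added assumption not taken from the text, a compounded variant in which each loss is taken on the already reduced balance. Function names are illustrative.

```python
def risk_per_trade(max_drawdown, losing_streak):
    """Rule from the text: split the tolerated drawdown evenly across the streak."""
    return max_drawdown / losing_streak


def risk_per_trade_compounded(max_drawdown, losing_streak):
    """Variant (assumption): each loss is a fraction of the shrinking balance."""
    return 1 - (1 - max_drawdown) ** (1 / losing_streak)


for streak in (20, 15, 10):
    simple = risk_per_trade(0.20, streak)
    compounded = risk_per_trade_compounded(0.20, streak)
    print(f"{streak} losses in a row: {simple:.2%} per trade "
          f"(compounded variant: {compounded:.2%})")
```

The simple rule is slightly conservative: because each loss is a percentage of a smaller balance, the compounded variant allows a marginally higher risk for the same drawdown limit.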

Therefore, if you trade using 0.5% risk on your account, your maximum drawdown halves compared with a 1% risk, so it is highly improbable that your drawdown moves above 10% of your current balance.

Below is a possible 30-year history of the sample system using 0.5% risk. Sometimes the turtle beats the rabbit, because a rabbit that runs too fast may get hit by a bullet.

The information received by the Federal Open Market Committee (FOMC) since its meeting in January has shown signs of further strengthening of the labour market, and economic activity has been growing at moderate but solid rates. Job gains have been strong in recent months, and the unemployment rate has remained at low levels. Recent data show that household spending and business fixed investment grew at moderate rates in 2018 after strong growth at the end of 2017.

On a twelve-month basis, overall inflation and inflation for items other than food and energy have remained below 2%. The economic outlook has improved in recent months due to the good results seen throughout 2017 and the tax reform approved at the end of that year.

The committee expected that with gradual adjustments in the monetary policy stance, the economy would continue to behave positively in the medium term and labour market conditions would remain robust. Regarding inflation and its annual base, the committee expected that in the short term this indicator would be close to 2% and that the bank’s goal would be met.

Due to the behaviour of the labour market, the main sectors of the economy and inflation, the committee decided to raise the target range for the federal funds rate to a range of 1.5% to 1.75%. The committee was explicit in that the monetary policy stance would remain accommodative as long as it was necessary for inflation to return to 2%.

This decision was in line with market expectations, so there was no strong reaction from the market. In the projections of the path of the interest rate, there is still no unanimity on what the next steps of the Federal Reserve will be as some expect a stronger policy, so they expect four increases during 2018. For other analysts, the path will continue the road stipulated so they expect only three increases during the current year.

The following graph shows the main projections of the committee. This graph shows economic growth above the natural long-term rate, and the rates expected since the December meeting have improved. The unemployment rate also shows a very positive behaviour and is below the long-term rate. Regarding the different inflation measures, inflation is expected below the bank’s target for 2018, but very close to the target level, and for the next two years, an optimal inflation rate is expected according to the bank’s mandate.

Graph 82. Economic projections of Federal Reserve Board members and Federal Reserve Bank presidents, March 2018. Retrieved 23rd March 2018 from https://www.federalreserve.gov/monetarypolicy/files/monetary20180321a1.pdf

In the press conference, Federal Reserve Chair Jerome Powell said that the decision to raise the target range of the interest rate marked another step in the normalisation of monetary policy, a process that had been underway for several years. But some caution was evident in his statements, which showed that the projected path of the interest rate included only two more hikes in 2018.

Job gains averaged 240,000 per month in the last three months, which is a very positive rate and makes it possible for new workers to be absorbed. The unemployment rate remained low in February, standing at 4.1%, while the rate of labour market participation increased.

According to Powell, that is a positive signal: the economically active population is getting older, which pushes the participation rate down, but this negative effect is offset by the entry of new workers.

Also, the Chair of the Federal Reserve concluded that there are certain specific factors that have contributed to the greater economic growth observed in recent months and these are:

Regarding inflation, Powell was clear that inflation was still below 2% regardless of what measure was used. According to the Chair of the Federal Reserve, this was due to unusual price reductions that occurred in late 2016 and early 2017. But for Powell, as the months passed in 2018, these unusual events would disappear, and inflation would be very close to 2%.

In his statements, the Chair of the Federal Reserve specified that, if the rates rose too slowly, this would increase the risk that monetary policy would have to adjust abruptly in the future if a shock should occur in the economy. At the same time, the committee wanted to prevent inflation from remaining below the target which could reduce the chances of acting quickly in the face of a recession in the US economy.

Finally, Powell pointed out that the reduction in the balance sheet that began in October was progressing smoothly. Only specific conditions of the economy could curb the normalisation of the balance sheet of the Federal Reserve. Powell was emphatic that they would use the balance sheet in addition to the interest rate to intervene in the economy if a deep economic recession were to occur.

In conclusion, the federal committee decided to raise the federal funds rate as expected by the market due to the good performance of the economy which continued to grow at high rates and above the long-term level. Although inflation was not at the desired level, according to the committee, this was due to transitory effects that would fade over the months, and thus inflation would be in the target range.

As already mentioned, the economy showed good signs due to the labour market, so the bank decided to raise rates, but the committee remained cautious about the future of the economy because a recession could not be ruled out. According to the statements made at the press conference, some indecision was evident on the part of the committee as they evaluated the two possible scenarios for the interest rate.

If they raised it too quickly, they could slow down the economy and thereby affect the labour market, which would lead to a drop in inflation and to a complex economic scenario in which future increases would not be possible, restricting the use of monetary policy. Conversely, if the committee raised it too slowly, a scenario could be generated where any economic shock, whether internal or external, could also affect the economic growth of the United States and limit future increases in the interest rate.

The market is still undecided on whether the Fed will make two or three more rate hikes during the current year. Some analysts question why the Federal Reserve continues to raise rates if the inflation rate still shows no stability. For them, the central bank should be more cautious in its monetary policy because it could be in the second scenario, where the economy still needs an accommodative policy, so that in the medium term future rate increases and the normalisation of the balance sheet could be limited.

Category: Fundamental Analysis, Intermediate, Currencies, economic cycles, Monetary Policy, Economy, Macroeconomics, Central Banks.

Key Words: Central Banks, Monetary Policy, Bank of Japan.

Tags: Macroeconomy, BoJ, Monetary policy, 2017.

At the January 2016 meeting, the Bank of Japan introduced negative interest rates, setting the reference rate at -0.1%. This negative rate meant that the central bank would charge commercial banks for some reserves deposited in Japan’s central financial institution. The measure was designed to encourage commercial banks to use their reserves to increase the supply of loans to consumers and investors in Japan, to reactivate the economy and overcome the deflation that the country was experiencing at that time.

This negative rate would not apply directly to the accounts that customers had with commercial banks so as not to affect the purchasing power of individuals or companies. It was not a measure taken impulsively since the Bank of Japan had been analysing what measures could boost the behaviour of inflation for several years.

The decision was made by the board of the bank in a split vote of 5 in favour of the measure and 4 against. In addition, the report summarising the meeting stipulated that the bank would move further into negative-rate territory if necessary, and that the measure would be implemented only until the bank achieved its 2% goal.

This measure of establishing negative rates has not been common for the central banks of the world’s leading economies since there is no consensus on the possible effects of negative rates. A problem that had lasted for quite some time in Japan was the decline in the prices of goods and services so that consumers restricted their spending due to their expectations of prices in the future.

At the press conference, the governor of the Bank of Japan, Haruhiko Kuroda indicated that deflation coupled with a global economic slowdown led to an unprecedented policy for Japan. For many analysts, the decision to adopt negative rates was surprising, and it was not known how much this could influence the short and medium-term inflation rate.

The consensus among many analysts was that the Japanese economy and inflation were not growing faster not because of a low supply of credit but because companies had pessimistic expectations about the future of the economy, so they preferred to postpone their investment decisions. Therefore, they expected that the outlook would not change even with negative interest rates.

Specifically, the bank adopted a three-tier system in which the balance that commercial banks held in the central bank would be divided into three levels:

This multi-level system in the balances was intended to prevent an excessive decrease in the income of financial institutions derived from the implementation of negative interest rates.

As for the guidelines for money market operations, the bank decided in a vote of 8 to 1 to conduct open market operations so that the monetary base would increase at an annual pace of about 80 trillion yen. The bank also decided to purchase Japanese Government Bonds (JGB) so that the amount outstanding would increase at an annual pace of around 80 trillion yen.

By early 2017, the bank confirmed that the short-term interest rate would remain at -0.1% and the long-term rate at 0%, so the bank decided to continue buying Japanese Government Bonds to keep bond yields at 0%. World economic growth was moderate, with the emerging economies lagging behind the growth of the developed economies.

The bank especially highlighted the US economy, which showed great strength in almost all its variables, ranging from household spending to exports to the labour market. Inflation was perhaps the only variable that had not shown the strength of other economic variables but was close to the objective of the FED of 2%.

Japanese exports improved, mainly by the automotive sector. Private consumption was expected to have a positive performance in 2017 due to a good performance of the labour market, and effects on wealth, given the growth of the stock index in Japan and the main economies of the world. Real estate investment also showed positive signs since the end of 2016.

Given these positive signs, the bank expected a moderate expansion of the economy in 2017 given a rise in domestic demand for goods and services, in addition to better global growth and the depreciation of the yen, which would continue to boost exports.

The committee recognised that there was a lack of strength for the inflation rate to be at 2%, so it was important for the bank to continue with its guidelines and its operations in the market in order to continue channeling inflation towards the objective set by the bank’s mandates. The committee cleared doubts about its increase in long-term rates given the rate hike that the FED carried out, being very clear that its monetary policy decisions would only be based on local inflation conditions and not on decisions of other central banks.

At the mid-year meeting in 2017, the bank decided to keep the negative interest rate of -0.1% in a vote of 7 to 2. In order to maintain the long-term interest rate at 0%, the bank decided to buy JGB at the same rate as it had already done by increasing its holdings by 80 trillion yen.

By mid-2017 the Japanese economy had returned to a moderate expansion, with a slight increase in exports as well as fixed investment in businesses. Private consumption still did not show positive signs despite a better outlook in the labour market with wages rising slightly. In terms of the consumer price index, its annual measurement was close to 0%, so the bank was far from its annual growth goal, but expectations were positive because they expected an upward trend of this indicator.

The bank said it would continue with the Quantitative and Qualitative Monetary Easing (QQE) program until inflation rises above 2% in a stable manner that would allow for a path of economic growth that is larger than expected until mid-2017.

At the October 2017 meeting, the bank committee decided by a vote of 8 to 1 to keep the short-term interest rate at -0.1%. For the long-term interest rate, the Bank of Japan continued acquiring JGBs to keep the interest rate at 0% for the long term. In the reports, it was indicated that the vote was not unanimous because one board member wanted more stimulus from the bank to reach the goal of 2% as soon as possible.

In the meeting held in October 2017, the bank continued with its monetary policy of negative interest rate established at -0.1%. Yields on 10-year Japanese government bonds were still zero given the intervention of the central bank. The Nikkei 225 index rose considerably during 2017 given high expectations in the corporate results of Japanese companies.

As for the yen, it depreciated against the dollar during the year due to the interest rate differential between both central banks. Regarding its parity with the euro, it did not fluctuate significantly during the year.

As in the January report, the performance of the global economy remained positive, especially in the United States, which maintained a robust growth rate with good employment rates and good dynamics in its domestic markets.

In Japan, the economy grew at moderate rates with good dynamics in the export sector that was positively boosted by world growth. Fixed investment in businesses showed signs of moderate growth mainly due to an improvement in corporate revenues, better financial conditions and a better expectation of economic growth in the following quarters.

The unemployment rate has remained at low levels between 2.5% and 3%, which has encouraged greater private and household spending. The behaviour of real estate at the end of 2017 showed flat signs and the industry showed a growing trend. Regarding inflation, the Consumer Price Index (CPI) for the main goods minus food showed figures between 0.5% and 1%, as shown in the following graph.

Graph 76. CPI Inflation Japan 2017. Retrieved 26th February 2017, from http://www.inflation.eu/inflation-rates/japan/historic-inflation/cpi-inflation-japan-2017.aspx

Although it is still not close to 2%, the behaviour of inflation has improved, and the bank’s expectations were that in the medium and long-term, inflation would be located at the bank’s target rate. It was clear to all board members that the engine of year-round growth was exports that benefited from a better global juncture.

If you compare the projections that the bank had in July and November, the projected inflation rate decreased in November due to more pessimistic expectations about price growth and a reduction in mobile telephony prices, but the medium and long-term rates remained unchanged. For some members, there was still a long way to go to reach the goal of 2% due to an excess supply of capital and a labour market that still needed to be tighter, so that wage increases would be stronger.

In conclusion, given the behaviour of the economy during 2017, the committee determined that the economy needed continuous monitoring to achieve its goals in the coming years. The inflation objective was not met, but the board was satisfied with the macroeconomic development of Japan. For most of the members, it was clear that the monetary easing program should continue to support the different measures of inflation so that the expectations of businesses and households would change and they would spend more, boosting wages and prices.

There was also the concern that other banks were ending their monetary easing programs and in some cases, interest rates were rising, so this could put pressure on the yen’s exchange rate against other currencies. The monetary easing program had begun later in Japan, so the normalisation of its monetary policy could also take longer. Given these statements, it was easy to understand why the executive board still did not change the negative interest rates and its purchase of Japanese government bonds.

©Forex.Academy

The Bank Of Japan Financial System Report

The Bank of Japan publishes the Financial System Report twice a year in order to assess the stability of the Japanese financial system and facilitate communication with interested parties who are concerned about such stability. The bank provides a regular and comprehensive assessment of the financial system with emphasis on detailing the structure of the system and the policies taken to achieve a robust system.

The bank uses the results of the report to plan its policy for ensuring the stability of the financial system and to provide guidelines and warnings to financial institutions. The bank also uses the results in international regulatory and supervisory discussions.

In the April 2017 report, the bank reported a notable rise in the prices of the main stock indices and interest rates after the election of the new president of the United States. In Japan, there was also a rise in the stock market and the Yen depreciated. The bank continued with its policy of Quantitative and Qualitative Monetary Easing with Yield Curve Control.

The internal loans of the financial institutions in circulation had increased close to 3% annually. There were no signs of overheating in the activity of the financial system nor the real estate market. In general, the financial system had maintained good stability since the crisis of 2008. The capital ratios required by financial institutions were above the level requested by the central bank and had sufficient capital for the risk to which they were exposed.

The results of the macroeconomic stress test indicated that financial institutions as a whole could be considered strong and resistant to economic stress situations. Developments in profits and capital of each institution in these situations of stress varied showing more robust institutions than others.

For the bank, the rise in the US stock market reflected better expectations of the economy and the administration of the new government. As a result of these better expectations about the United States, the dollar appreciated against the major currencies of the world.

In terms of the European financial markets, the stock market had maintained a good general performance coupled with low volatility. The most volatile period of the last two years occurred after the U.K. referendum.

Regarding the monetary policy of the Japanese central bank, the short-term interest rate remained close to 0% or in negative territory. The yields of the Japanese Government Bonds (JGB) continued to show a normal behaviour with the guidelines of market operations where the interest rate had been set at -0.1% and the target on yields on 10-year bonds was 0%. In the following graph, you can see how the yield curve of the JGB was.

Graph 82. Long-Term JGB yields (10 years) and JGB yield curve. Retrieved 5th March 2018 from https://www.boj.or.jp/en/research/brp/fsr/data/fsr170419a.pdf

As for the Japanese stock market, it had shown an upward trend thanks to the good global performance of the shares, mainly in Europe and the United States. Since the end of 2016 and in 2017, the Japanese index had shown a stable behaviour without major changes.

The amount of credit risk of the main financial institutions had shown a downward trend. This was the result of improving the quality of the loans, which reflected a better dynamic of the economy in general. The following graph shows the decreasing trend of the risk of the main banking institutions.

Graph 83. Credit risk among financial institutions. Retrieved 5th March 2018 from https://www.boj.or.jp/en/research/brp/fsr/data/fsr170419a.pdf

In the second report of the year in October 2017, the bank noted that global volatility in the main financial markets remained low, along with positive but moderate economic growth, despite geopolitical tensions between North Korea and the United States. There were no significant changes in capital flows including flows destined for emerging markets.

In Japan, the monetary policy followed an accommodative path and the trend of loans granted had slowed due to a higher cost of loans in foreign currencies. Regarding the local financial market, the rate of growth of loans grew to 3%, and the demand for loans by small companies had improved.

The bank did not observe any financial imbalance in the assets and the financial entities. They continued using accommodative policies granting loans without major restrictions to the economy.

The real estate market showed no signs of overheating, but there was evidence of high prices in some places in Tokyo. In the stress scenarios applied by the central bank, if the financial market faced complex situations and the risk spread to the real economy, this could affect the real estate market.

The bank also did not observe greater imbalances in financial institutions or economic activity, so most commercial banks had good ratios between debt and capital, which made them resistant to stress situations, as in the first report of 2017. The banks were robust in capital and liquidity regardless of the scenario in which the economy was located, due to a good rebalancing of the portfolios of the banks that have faced a greater demand for loans.

The profits of Japanese banks have been decreasing, but this is happening generally in developed economies due to the low interest rate environment put in place by central banks after the 2008 crisis. In Japan, banks’ profit margins have also decreased due to strong competition among banks for the market, and in recent years more banks have exited the market than entered it.

A significant risk that the bank observed was the continuation of low-interest rates in the main economies in the world, which led to greater liquidity in the markets and investors taking more risk than desired by the bank’s board. Given the above, stocks in the United States and Europe had reached record highs, and valuation indicators P/E (Price/Earnings ratio) had reached historically high levels.

As in the April report, the volatility of the financial markets was low, which could mean an excess of market confidence at current valuations and an excessive risk taken by investors, coupled with greater investor leverage. All this generated a greater risk than desired by the bank’s committee.

In terms of financial markets, the short and long-term interest rates remained stable as programmed by the monetary easing policy and share prices had risen moderately. The short-term interest rate remained in negative territory.

The Yen had depreciated against the Euro, reflecting a decrease in uncertainties concerning political situations in Europe and expectations of a reduction in monetary stimulus by the European Central Bank (ECB). On the other hand, the Yen remained stable against the Dollar since the second half of 2017 and some investors expected an appreciation against the Dollar due to some political risks in the United States.

Finally, in the bank’s report, the committee stated that financial institutions had continued to increase their balance sheets reflecting an increase in deposits and the rebalancing of portfolios including risky assets. Assets and total debts of financial institutions increased to 236 trillion yen since 2012, and the portfolio was continuously balanced between bonds and shares.

In conclusion, according to the reports issued in 2017 by the Bank of Japan, the financial system was resistant to the stress situations tested by the bank. As in most countries, some banks have better asset quality and portfolios than others, so there are always recommendations for specific banks. The economy grew moderately during 2017, and monetary policy remained accommodative to encourage banks to grant more loans and thus generate more growth which in the medium term would lead to inflation at 2%.

As mentioned previously, the bank saw some risks in international markets due to euphoria, mainly in the stock markets, which could generate imbalances in the real estate sector of the economy. Regarding the Yen with respect to other currencies, the behaviour was stable during the year, although there were slight depreciations against the Euro and the Dollar.

©Forex.Academy

Japan’s economic outlook

Category: Fundamental analysis, Intermediate, Currencies, economic cycles, Monetary Policy, Economy, Macroeconomics, Central Banks.

Key Words: Central Banks, Monetary Policy, Bank of Japan, Projections.

At each meeting of the bank’s board, a review is made of the state of the Japanese economy, the projections for the current year and the next two years, and the risks to which the economy is exposed both internally and externally.

In the April 2017 report, the board concluded that the economy would continue its positive trend, growing above the potential rate estimated by the bank, due to better internal financial conditions, some government stimulus and greater global economic growth. The bank was explicit that the expected growth in 2017 and 2018 would be higher than in 2019 due to a cyclical slowdown in business fixed investment and an increase in the consumption tax that had already been scheduled.

As global growth had generally improved, Japanese exports had shown an upward trend, contributing to economic growth. Private consumption had also been resilient due to a better outlook in the labour market with better employment rates and higher wages.

As already mentioned, the bank expected that by 2019 the local economy would slow down a little due to weaker domestic demand, reflecting the end of the expansion cycle in business investment as well as the increase in the consumption tax scheduled for that year.

Regarding inflation, the annual change in the CPI (Consumer Price Index) excluding fresh food continued to show better figures than in 2016, with a clear upward trend thanks to a better performance of the economy and an increase in medium- and long-term expectations. Even so, price growth was not as strong as the bank would have liked, so it followed the price index with some caution.

The annual CPI for April excluding food and energy was close to 0%, so the bank remained aware that the price index was far from the target rate of 2%.

Regarding monetary policy, the bank indicated that it would continue to apply Quantitative and Qualitative Monetary Easing with Yield Curve Control until inflation reached 2%, so the short-term interest rate would remain in negative territory.

Inflation could reach 2% in the medium and long term, but not in the short term, given the weak behaviour of the main price indices. The medium- and long-term estimate rested on better economic growth rates together with energy prices that had been rising in recent years. In addition, the policy of monetary easing continued to drive the supply of credit and liquidity to the market, which was expected to keep pushing inflation towards the bank’s target figure.

Also, the unemployment rate continued to decrease, showing figures between 2.5% and 3%, so the labour market was tightening. This could generate an increase in nominal wages, which in turn could lead people to consume more and so boost inflation. The following two graphs show the main projections of the members of the committee and the expected behaviour of the CPI until 2019.

Graph 77. Forecasts of the majority of Policy Board Members. Retrieved 27th February 2018 from https://www.boj.or.jp/en/mopo/outlook/gor1704b.pdf

Graph 78. CPI (all items less fresh food). Retrieved 27th February 2018 from https://www.boj.or.jp/en/mopo/outlook/gor1704b.pdf

In the July report, the committee stated that the path of economic growth was still positive due to the factors already described: a better global outlook and the incentives created by the government to stimulate the local economy.

Regarding inflation, there were negative signals: the CPI excluding food and energy prices was weak, at figures between 0% and 0.5%. The bank indicated that this could be due to the caution companies showed when setting prices and the wages of their workers. This behaviour caused medium- and long-term inflation expectations to decrease somewhat. The bank stressed that for inflation to reach 2%, companies had to be more determined when setting prices and wages.

In recent months, inflation had been driven by energy prices, owing to higher global demand for fuels and the agreements reached by OPEC to sustain oil prices. This is why the bank was concerned that the other components of the index were not contributing to the recent rise in the CPI.

Due to the weakness of inflation, the bank decided that it would continue with its policy of monetary easing until inflation was close to 2%, so that short and medium-term interest rates would remain in negative territory. In addition, the financial system continued to offer credit facilities to the market.

Despite the weak performance, in the bank’s projections, it was estimated that in the medium and long-term the inflation rate would be at 2%, but the projections had fallen slightly on this variable for the next two years.

In the October 2017 report, the bank’s committee continued to observe a positive performance of the economy due to higher exports thanks to the better performance of the world economy throughout 2017.

In terms of domestic demand, business fixed investment had followed a slight upward trend, supported by better corporate profits and improved expectations among entrepreneurs about the Japanese economy.

Private consumption continued to grow moderately, thanks to the better performance of the labour market. There were good rates of job creation and wages rose slightly. Public investment had also behaved positively during the last quarter, unlike household spending, which had shown flat figures throughout the year.

Looking at the financial conditions, the outlook did not change with respect to the two previously issued reports, since the short and medium-term rates remained in negative territory. Financial institutions were still willing to lend to the market, and corporate bonds were still well received by the market, so the bank continued to observe the accommodative financial conditions.

Although inflation continued to rise slightly, as in mid-2017, this behaviour was mainly explained by the rise in fuel prices and energy in general. The weak behaviour of the CPI excluding food and energy was due to the small increases in companies’ prices and wages, and to a mobile phone market that was increasingly competitive on price.

If you compare the projections that the bank had in October with the projections at the beginning of 2017, the CPI showed a weaker than expected behaviour, but it was expected that in 2018 and 2019 inflation would have more positive figures as shown in the following graph.

Graph 79. CPI (all items less fresh food). Retrieved 27th February 2018 from https://www.boj.or.jp/en/mopo/outlook/gor1710b.pdf

The expected improvement in the CPI over the following years rested on better conditions in the labour market, a better performance of the economy in general and better market expectations. The graph shows that inflation bottomed out at the end of 2016, showing deflationary signs.

The risks faced by the Japanese economy according to the bank were:

These factors could weigh on the Japanese economy due to its direct involvement in world trade. The following graph shows the bank’s projections at the October meeting.

Graph 80. Forecasts of the majority of Policy Board members. Retrieved 27th February 2018 from https://www.boj.or.jp/en/mopo/outlook/gor1710b.pdf

If these projections are compared with those made at the beginning of the year and in July, expectations for 2017 and 2018 improved and remained the same for 2019. That shows the good performance of the economy and a slight recovery of inflation, but, as the bank reaffirmed, that recovery was not robust since it was mainly based on energy prices. The other components of the CPI did not yet show positive figures, so the bank expected inflation to be close to 2% in 2019.

As long as the inflation rate was not close to 2%, the monetary easing policy would continue. That would include negative interest rates and acquisitions of assets such as corporate bonds to provide liquidity to the market and thus achieve better growth rates. This would encourage companies to be more aggressive in their increases in prices and workers’ wages, which were not as strong as would be expected from a tight labour market, although they did rise during 2017.

The following graph shows the CPI excluding food and energy. The figure during 2017 was well below 0.5%, a negative sign that explains why the bank’s committee was concerned: the basic items of the index showed very weak behaviour.

Graph 81. Chart 38, CPI. Retrieved 27th February 2018 from https://www.boj.or.jp/en/mopo/outlook/gor1710b.pdf

©Forex.Economy

The quarterly reports on the Federal Reserve balance sheet show the composition of the assets, obligations, capital and financial information of the Federal Reserve. With these reports, it is possible to analyse how the portfolio of the Federal Reserve is composed and to obtain some clues as to what monetary policy will be like. It is important to remember that, for the Federal Reserve, the main monetary policy tool is the federal funds rate, and a secondary tool is the modification of the assets reported in its balance sheet.

In the March report of the balance sheet of the Federal Reserve, it was stated that since 2009 the Federal Reserve had the power to carry out Open Market Operations (OMOs) in the domestic market. These operations included limited purchase and sale of:

The OMOs have historically been used by the Federal Reserve to adjust the supply of reserve balances, as well as to maintain the federal funds rate close to the objective established by the Federal Open Market Committee (FOMC).

In addition, in recent years the Federal Reserve has implemented other tools to provide liquidity in the short term to domestic banks and other depository institutions through the discount window.

Between October 2016 and February 2017, the System Open Market Account’s (SOMA) holdings of Treasury securities changed little due to the FOMC policy of rolling over maturing Treasury securities at auction.

In this period, the SOMA’s holdings of agency debt decreased due to the maturity of the bonds. On the other hand, holdings of MBS increased due to the reinvestment of principal payments. The agency mortgage-backed securities (MBS) were assets acquired by the bank to support the real estate and housing market after the 2008 crisis and thereby also provide security in the US financial market.

MBS are financial instruments traded in the capital markets, whose value and flow of payments are backed by a portfolio of mortgage loans, generally on residential property. The Federal Reserve resorted to this type of unconventional monetary policy to avoid deflationary risk and give a boost to an economy that had been depressed since 2008.

From 2009 to 2014, the expansion of SOMA securities holdings was driven by a series of large-scale asset purchase programs (LSAPs) that were conducted to support the housing market and boost the economy through the financial system.

In the graphs of the article, it is observed that there was no significant change between the previous reports and that of the first quarter of 2017: there were no large acquisitions, but neither was there any reduction in assets. Read together with the monetary policy reports, normalisation of the balance sheet was not to begin until the end of 2017, and various statements hinted that this normalisation would be very slow so as not to affect the credit market and therefore the economy.

In the May report, SOMA’s holdings did not show large changes in line with market expectations, which still did not expect any reduction in holdings. Agency debt holdings decreased between February and April due to the maturity of the bonds and the holding of MBS also decreased due to a difference between the payment times of the principal and when this amount was reinvested.

From mid-2014, the Federal Reserve was one of the first central banks to issue press releases informing the market of its intention to begin normalising its balance sheet, since in the bank’s view the objective of this unconventional policy had been achieved. Once these intentions were known, the markets became somewhat volatile, given that normalisation would mean less liquidity for the market and the financial system, and possibly higher interest rates for banks, which would lead investors to review their portfolios.

In the August report, there were few changes in the bank’s assets due to the policy of reinvesting Treasury securities at auction. Agency debt decreased, as in the previous reports, due to the maturity of the bonds, while the amount of MBS on the balance sheet did not change. Reading this report alongside the Federal Reserve’s monetary policy report, the market concluded that the normalisation program would begin in October. In addition, the last rise in the federal funds rate had occurred at the June meeting, so the market had high expectations regarding the normalisation of monetary policy in general, including the balance sheet.

In the report for the fourth quarter of 2017, the Fed explained that on 20 September 2017 the FOMC had announced that the balance sheet normalisation program would start in October, with its assets being reduced gradually through a reduction in the reinvestment of principal payments received from the securities held in the SOMA. Principal payments would be reinvested only to the extent that they exceeded the established limits.
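The reinvestment rule in the paragraph above can be illustrated with a minimal sketch. It assumes a single monthly limit and uses made-up amounts; the function names and the figures are hypothetical and are not taken from the Federal Reserve's reports.

```python
def reinvested_amount(principal_received: float, monthly_limit: float) -> float:
    """Part of the month's principal payments that is reinvested.

    Only the amount above the limit is reinvested; anything up to the
    limit is allowed to run off the balance sheet.
    """
    if principal_received < 0 or monthly_limit < 0:
        raise ValueError("amounts must be non-negative")
    return max(0.0, principal_received - monthly_limit)


def runoff_amount(principal_received: float, monthly_limit: float) -> float:
    """Part of the month's principal payments that is NOT reinvested."""
    if principal_received < 0 or monthly_limit < 0:
        raise ValueError("amounts must be non-negative")
    return min(principal_received, monthly_limit)


# Hypothetical figures, in billions of dollars, for illustration only.
principal = 10.0  # principal payments received in the month (assumed)
limit = 6.0       # established monthly limit (assumed)

print("Reinvested:", reinvested_amount(principal, limit))
print("Balance sheet reduction:", runoff_amount(principal, limit))
```

In a month where principal payments fall below the limit, nothing is reinvested and the holdings shrink by the full amount received, which is why the reduction in assets is gradual rather than fixed.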