Understanding statistics is one of the fundamental skills required for quantitative analysis. Today’s article discusses two basic concepts: distribution and probability. The two concepts are closely related. Probability provides the basis for mathematical calculations, and distributions help us visualize what is happening with the data.

Frequency Distribution and Histograms

Let’s start with the simplest part: A distribution is simply a way to describe the pattern of the data.

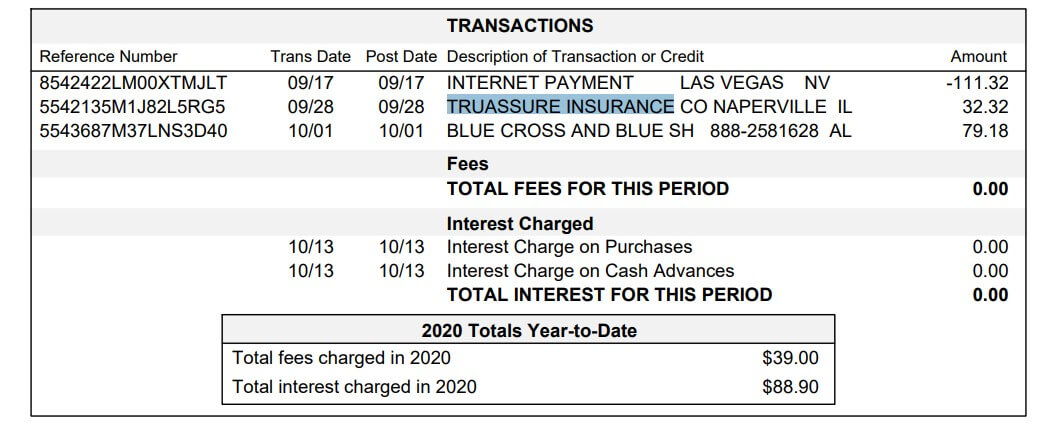

As a simple example, take the daily returns of a stock market index or the results of a backtest. These returns are our sample data.

To get a clearer view of these returns, we can group them into intervals of equal width and count the number of observations in each interval. If we plot these counts, we get what statistics calls a frequency histogram. Histograms give us an overview of how the returns are distributed.
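A minimal sketch of the binning step described above, using only the standard library. The return values are invented for illustration, and the helper name `frequency_histogram` is hypothetical; the bins simply span the sample's minimum to maximum in equal-width intervals.

```python
def frequency_histogram(returns, n_bins):
    """Group returns into n_bins equal-width intervals and count observations in each."""
    low, high = min(returns), max(returns)
    width = (high - low) / n_bins
    if width == 0:
        raise ValueError("all returns are identical; cannot build intervals")
    counts = [0] * n_bins
    for r in returns:
        # Index of the interval; the maximum value is placed in the last bin.
        i = min(int((r - low) / width), n_bins - 1)
        counts[i] += 1
    edges = [low + k * width for k in range(n_bins + 1)]
    return edges, counts

# Hypothetical daily returns (in %), invented for illustration only.
daily_returns = [-1.2, -0.4, 0.1, 0.3, 0.5, -0.8, 0.2, 1.5, 0.0, -0.1]
edges, counts = frequency_histogram(daily_returns, n_bins=4)
for lo, hi, c in zip(edges, edges[1:], counts):
    print(f"[{lo:+.2f}, {hi:+.2f}): {'#' * c} ({c})")
```

Printing one row of `#` per interval gives a crude text histogram; a plotting library would draw the same counts as bars.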

From this frequency distribution we can also read the measures of central tendency of our sample:

– For a roughly symmetrical histogram, the value at the center indicates the arithmetic mean of the data (the mean return).

– The median divides the distribution in two, leaving the same number of values on each side.
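Both measures of central tendency can be computed directly with Python's `statistics` module. The returns below are hypothetical sample data; the last lines check that the median leaves the same number of observations on each side.

```python
import statistics

# Hypothetical daily returns (in %), invented for illustration only.
daily_returns = [-1.2, -0.4, 0.1, 0.3, 0.5, -0.8, 0.2, 1.5, 0.0, -0.1]

mean_return = statistics.mean(daily_returns)      # arithmetic mean
median_return = statistics.median(daily_returns)  # middle of the sorted data

# The median splits the sample: count observations strictly below and above it.
below = sum(r < median_return for r in daily_returns)
above = sum(r > median_return for r in daily_returns)
print(f"mean={mean_return:.3f}%, median={median_return:.3f}%, below={below}, above={above}")
```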

We can also see how variable the results have been (measures of dispersion). The volatility of returns is measured with the standard deviation. Finally, we can see the shape of the distribution: whether it is symmetrical, whether it has “fatter tails” (that is, more extreme results) than a normal distribution would, and so on.
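A short sketch of the dispersion measure, assuming the usual sample standard deviation (n − 1 in the denominator) as the volatility estimate. The data are hypothetical; counting the returns that fall outside one standard deviation of the mean gives a feel for what the number means.

```python
import statistics

# Hypothetical daily returns (in %), invented for illustration only.
daily_returns = [-1.2, -0.4, 0.1, 0.3, 0.5, -0.8, 0.2, 1.5, 0.0, -0.1]

mean_return = statistics.mean(daily_returns)
volatility = statistics.stdev(daily_returns)  # sample standard deviation

# Returns lying more than one standard deviation away from the mean.
outside = [r for r in daily_returns if abs(r - mean_return) > volatility]
print(f"volatility={volatility:.3f}%, outside mean ± 1 sd: {outside}")
```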

Let’s analyze these features in detail.

Characteristics of a Distribution

Asymmetry (Skewness)

A very important aspect is the symmetry of the distribution. If a distribution is symmetrical, there are as many values to the right of the mean as to the left, and hence as many deviations with a positive sign as with a negative sign.

We say there is positive (or right) asymmetry if the “tail” to the right of the mean is longer than the one to the left, that is, if there are values farther from the mean on the right. We say there is negative (or left) asymmetry if the “tail” to the left of the mean is longer than the one to the right, that is, if there are values farther from the mean on the left. (Wikipedia)

When we talk about trading systems, a system can show negative or positive asymmetry depending on its characteristics. For me, the most obvious example is comparing the distribution of results of a trend-following system with that of a mean-reversion system. In the first case, the sample would show positive asymmetry: when the system succeeds it gains a lot, so those returns lie far to the right of the mean, and when it fails it loses little, so the values to the left of the mean stay close to it. In the second case, it would be the other way around.
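As a rough sketch of the two profiles described above, the moment-based sample skewness g1 = m3 / m2^1.5 can be computed on per-trade results. The trade lists are invented for illustration, and this is one common skewness estimator (without small-sample correction), not necessarily the one the author uses.

```python
def skewness(xs):
    """Moment-based sample skewness g1 = m3 / m2**1.5 (no small-sample correction)."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5

# Hypothetical per-trade results (in %): many small losses, a few large wins.
trend_following = [-1.0, -0.8, -1.1, -0.9, -1.0, 4.5, -0.7, -1.2, 6.0, -0.9]
# Mirror image: many small wins, a few large losses.
mean_reversion = [-x for x in trend_following]

print(f"trend-following skew: {skewness(trend_following):+.2f}")
print(f"mean-reversion skew:  {skewness(mean_reversion):+.2f}")
```

Because the second list is the first one negated, the two skewness values have the same magnitude and opposite signs.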

Kurtosis

Kurtosis is a statistical measure of how the values of a distribution are concentrated around its mean and in its tails. The kurtosis coefficient indicates whether the distribution has “heavy” tails, that is, whether extreme values occur with high frequency. The coefficient measures the degree of peakedness or flatness of the distribution with respect to the normal distribution. Taking the normal distribution as the reference, a distribution can be leptokurtic (heavier tails than the normal, positive excess kurtosis), platykurtic (lighter tails, negative excess kurtosis), or mesokurtic (similar to the normal, excess kurtosis close to zero).
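A minimal sketch, assuming the moment-based excess kurtosis g2 = m4 / m2² − 3, which is 0 for a normal distribution. Both samples are invented, and the `classify` helper with its ±0.5 tolerance band is a hypothetical rule of thumb, since a finite sample almost never gives exactly zero.

```python
def excess_kurtosis(xs):
    """Moment-based excess kurtosis g2 = m4 / m2**2 - 3 (0 for a normal distribution)."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / m2 ** 2 - 3

def classify(xs, tol=0.5):
    """Hypothetical labelling rule; the tolerance band around 0 is an arbitrary choice."""
    k = excess_kurtosis(xs)
    if k > tol:
        return "leptokurtic"
    if k < -tol:
        return "platykurtic"
    return "mesokurtic"

# Hypothetical daily returns (in %): mostly calm days plus two extreme ones.
fat_tailed = [0.1, -0.1, 0.2, -0.2, 0.0, 0.1, -0.1, 0.0, 5.0, -5.0]
# Hypothetical returns spread evenly over a narrow range.
flat = [-1.0, -0.5, 0.0, 0.5, 1.0]

for name, xs in (("fat_tailed", fat_tailed), ("flat", flat)):
    print(f"{name}: excess kurtosis {excess_kurtosis(xs):+.2f} -> {classify(xs)}")
```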

Probability Distribution

So far we have simply been analyzing our sample data (in the example, the results of trades) using descriptive statistics. However, when working with data we want more than a description: we want to predict how that data will behave in the future. For this, we use probability theory and inferential statistics. From the results of a sample, we seek to draw conclusions about the whole population.

Formal Definition: What is a Probability Distribution

If we go to Wikipedia, we can learn that:

In probability theory and statistics, the probability distribution of a random variable is a function that assigns to each event defined on the variable the probability that the event occurs. The probability distribution is defined over the set of all events, and each event is a range of values of the random variable. It is also closely related to frequency distributions. In fact, a probability distribution can be understood as a theoretical frequency distribution, since it describes how results are expected to vary.

The probability distribution is completely specified by the distribution function, whose value at each real x is the probability that the random variable X takes a value less than or equal to x, that is, F(x) = P(X ≤ x). (Source: Wikipedia)
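Applied to a sample, the distribution function becomes the empirical CDF: for any x, the fraction of observations less than or equal to x. A minimal sketch; the returns and the `empirical_cdf` helper are hypothetical, for illustration.

```python
from bisect import bisect_right

def empirical_cdf(sample):
    """Return F(x): the fraction of observations less than or equal to x."""
    data = sorted(sample)
    n = len(data)
    # bisect_right counts how many sorted values are <= x.
    return lambda x: bisect_right(data, x) / n

# Hypothetical daily returns (in %), invented for illustration only.
daily_returns = [-1.2, -0.4, 0.1, 0.3, 0.5, -0.8, 0.2, 1.5, 0.0, -0.1]
F = empirical_cdf(daily_returns)

print(F(0.0))  # share of days with a return <= 0%
```

F is 0 below the smallest observation and 1 at or above the largest, just like a theoretical distribution function.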

How can we interpret this on a practical level? If the returns in our sample follow a normal distribution, then the mean and the standard deviation are all we need to calculate probabilities about profitability and risk. We explain this in more detail further down in the article.
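A sketch of that idea with Python's `statistics.NormalDist`: once a mean and a standard deviation are fixed, probabilities about returns follow from the distribution function. The daily mean of 0.05% and volatility of 1% are hypothetical values, and normality is an assumption.

```python
from statistics import NormalDist

# Hypothetical daily return model: mean 0.05%, standard deviation 1% (normality assumed).
returns = NormalDist(mu=0.0005, sigma=0.01)

p_loss = returns.cdf(0.0)           # P(R <= 0): probability of a losing day
p_big_gain = 1 - returns.cdf(0.02)  # P(R > 2%)
print(f"P(loss) = {p_loss:.1%}, P(gain > 2%) = {p_big_gain:.1%}")
```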

Normal Distribution and Probability Models

There are many types of probability distribution. In this article, we will only talk about the normal distribution, which is the best-known type of distribution and the one on which most probability models are based. Only two parameters are needed to describe it: the arithmetic mean (which defines the central value) and the standard deviation (which describes the width of the bell).
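For reference, the standard density of the normal distribution, which depends only on the mean μ and the standard deviation σ:

```latex
f(x) = \frac{1}{\sigma\sqrt{2\pi}} \exp\!\left( -\frac{(x-\mu)^2}{2\sigma^2} \right),
\qquad
F(x) = P(X \le x) = \int_{-\infty}^{x} f(t)\,dt
```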

Under the normal assumption, modeling risk only requires the mean and the standard deviation, because these two parameters fully determine the distribution, and the distribution assigns a probability to each possible outcome of an experiment. The probability density traces the bell curve, and the area under the curve between two values is the probability that the variable falls between them; the total area under the curve equals 1.
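To make the "area under the curve" idea concrete, this sketch integrates the normal density numerically with the trapezoidal rule and compares the result with the difference of the distribution function. The return model is hypothetical, and `area_under_pdf` is an illustrative helper, not a library function.

```python
from statistics import NormalDist

def area_under_pdf(dist, a, b, steps=10_000):
    """Approximate the area under the density between a and b with the trapezoidal rule."""
    h = (b - a) / steps
    total = 0.5 * (dist.pdf(a) + dist.pdf(b))
    total += sum(dist.pdf(a + k * h) for k in range(1, steps))
    return total * h

returns = NormalDist(mu=0.0005, sigma=0.01)  # hypothetical daily return model
area = area_under_pdf(returns, -0.01, 0.01)  # P(-1% <= R <= 1%) as an area
exact = returns.cdf(0.01) - returns.cdf(-0.01)
print(f"area ≈ {area:.6f}, cdf difference = {exact:.6f}")
```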

Intuitively, values far from the mean occur less often, while values close to the mean are much more frequent. This lets us define intervals that contain the returns with a given probability: in a normal distribution, about 68% of values lie within one standard deviation of the mean, about 95% within two, and about 99.7% within three. This is the kind of reasoning behind parametric Value at Risk (VaR) models, which estimate the loss that should not be exceeded at a given confidence level.
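A sketch of both ideas under a normal model with hypothetical parameters: the probability contained in mean ± k standard deviations, and a one-day parametric VaR at 95% confidence. This is one common, simple VaR variant (normal returns, expressed as a fraction of position value); real VaR implementations vary.

```python
from statistics import NormalDist

mu, sigma = 0.0005, 0.01  # hypothetical daily mean return and volatility
returns = NormalDist(mu, sigma)

# Probability that a return lies within k standard deviations of the mean.
coverage = {}
for k in (1, 2, 3):
    coverage[k] = returns.cdf(mu + k * sigma) - returns.cdf(mu - k * sigma)
    print(f"mu ± {k} sigma: [{mu - k * sigma:+.4f}, {mu + k * sigma:+.4f}] -> {coverage[k]:.1%}")

# Parametric one-day VaR at 95%: the loss exceeded on only 5% of days under the model.
confidence = 0.95
var_95 = -returns.inv_cdf(1 - confidence)
print(f"95% one-day VaR ≈ {var_95:.2%} of position value")
```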

Volatility, which in this case is measured by the standard deviation, is a measure of uncertainty (risk). This uncertainty is directly related to the probability of obtaining a return close to the expected return (the mean): the higher the volatility, the lower that probability.

For the same expected return, the curve flattens when volatility increases and becomes narrower and taller when volatility decreases. An asset whose returns have a higher standard deviation is considered more volatile, and therefore riskier, than an asset with lower volatility.
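A small comparison of two hypothetical assets with the same expected daily return but different volatilities (0.5% and 2%, invented values). The peak height of the density and the probability of ending within 1% of the mean both show the "narrower and taller" versus "flatter" shapes.

```python
from statistics import NormalDist

mu = 0.0005  # same hypothetical expected daily return for both assets
low_vol = NormalDist(mu, 0.005)
high_vol = NormalDist(mu, 0.02)

for name, asset in (("low volatility", low_vol), ("high volatility", high_vol)):
    peak = asset.pdf(mu)  # height of the bell at the mean
    near_mean = asset.cdf(mu + 0.01) - asset.cdf(mu - 0.01)  # P(|R - mu| <= 1%)
    print(f"{name}: peak height {peak:.1f}, P(within 1% of mean) = {near_mean:.1%}")
```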

Other Notes

When we talk about the distribution of the entire population, its properties (mean, standard deviation, etc.) are called parameters. When we talk about the distribution of a sample, its properties are called statistics, and we use them to estimate the population parameters.
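A sketch of the distinction: we pretend the population is a normal distribution with known parameters (an assumption made only for illustration), draw a sample of 250 daily returns, and compute the sample statistics that estimate those parameters. The seed only makes the run repeatable.

```python
from statistics import NormalDist, fmean, stdev

# Hypothetical "population" with known parameters, assumed for illustration.
population = NormalDist(mu=0.0005, sigma=0.01)

# Draw a sample of 250 daily returns (about one trading year).
sample = population.samples(250, seed=42)

# Statistics computed from the sample estimate the population parameters.
sample_mean = fmean(sample)
sample_sd = stdev(sample)
print(f"parameters: mu={population.mean:.4f}, sigma={population.stdev:.4f}")
print(f"statistics: mean={sample_mean:.4f}, sd={sample_sd:.4f}")
```

Rerunning with a different seed gives slightly different statistics, while the parameters stay fixed.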

Why use statistical distributions to measure risk if, in the end, real results rarely conform exactly to a theoretical distribution? Because we are working with models, and models are simplifications. Having a theoretical framework on which to base a quantitative investment strategy makes the whole approach more solid.